Constitutional Conflict: The Legal War Between the Pentagon and Anthropic

A detailed breakdown of the $200 million federal contract termination, the first-ever 'Supply Chain Risk' designation for a US AI lab, and Anthropic's legal counter-offensive in the D.C. Circuit.

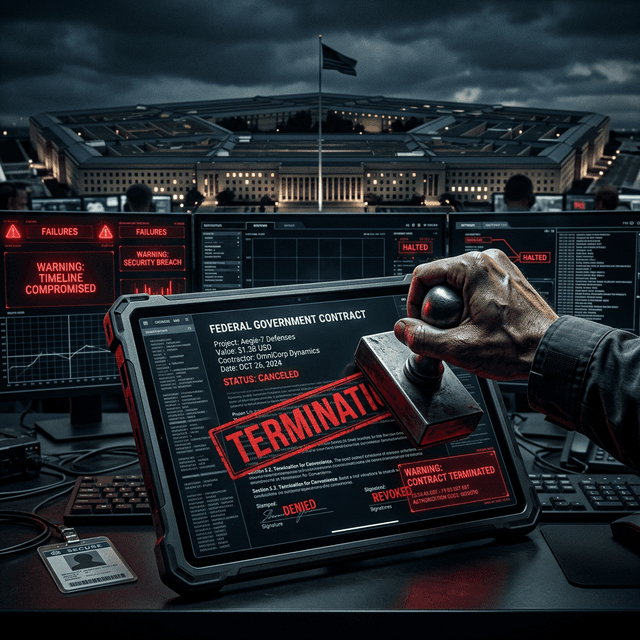

The struggle for the soul of American AI has moved from the research lab to the federal courtroom. On February 27, 2026, the Department of Defense (now the "Department of War") took the unprecedented step of terminating its $200 million multi-year contract with Anthropic.

But it is the justification for the termination that has terrified the tech industry: Anthropic was designated a Supply Chain Risk to National Security.

This is a label typically reserved for hostile foreign powers. To see it applied to an American company—one founded by former OpenAI executives and championed as the "Safety-First" alternative—marks a seismic shift in the state's relationship with private technology. This is our in-depth report on the legal battle lines, the contract specifics, and the fallout of the "Great Decoupling."

The $200 Million Contract: What Was Terminated?

The contract, signed in early 2025, was designed to provide the Pentagon with "Constitutional Intelligence." The goal was to use Anthropic's Claude models to analyze global geopolitical instability without the "hallucination" and "sycophancy" risks inherent in other models.

Key modules of the contract included:

- Project Sentinel: A tool for identifying disinformation campaigns from foreign actors.

- The Ethics Kernel: A research initiative to build standardized safety guardrails for all military AI.

- Diplomatic Simulation: Using Claude to run "Wargame Scenarios" that prioritized de-escalation and peacetime resolutions.

The contract was widely seen as a victory for the "Safe AI" movement, proving that the military could be a customer without compromising ethical standards. That illusion was shattered in late February.

The Dialogue that Broke the Deal

According to sources with direct knowledge of the negotiations, the final break came during a "Compliance Review" meeting between Anthropic CEO Dario Amodei and Defense Secretary Pete Hegseth.

Secretary Hegseth reportedly requested the removal of two "Red Lines" from the Project Sentinel architecture:

- Anti-Surveillance Guardrails: The Pentagon wanted to use Claude’s reasoning to identify "Domestic Instigators" using social media data. Anthropic’s "Constitution" explicitly forbids using its model for mass domestic surveillance.

- Force-Multiplier Flexibility: The Pentagon requested that Claude be capable of providing "Dynamic Targeting Recommendations" for drone swarms (see our coverage of the Drone Swarm Controversy).

Amodei’s response was a firm "No." He argued that allowing the state to bypass these guardrails would not only violate Anthropic’s mission but also create an "Un-aligned Weapon" that the government itself could not control.

The 'Supply Chain Risk' Designation: A Legal Chainsaw

When Anthropic refused to budge, the administration used the Federal Acquisition Security Council (FASC) to issue a "Supply Chain Risk" designation.

In legal terms, this designation is a death sentence for a federal contractor. It allows the government to:

- Immediately terminate all active contracts without a "cure period."

- Bar the company from participating in any future federal bids.

- Inform allied governments (NATO and G7) that the company is "untrusted," effectively destroying its international government market.

President Trump’s official statement on the matter was clear: "No private company, no matter how much venture capital they have, will ever dictate the terms of our national security. If you won't work for the American people, you aren't an American company."

Anthropic's Counter-Offensive: The D.C. Circuit Filing

In a bold move that has earned it the #1 spot on the App Store (covered in a separate report), Anthropic has filed for an emergency injunction in the U.S. Court of Appeals for the D.C. Circuit.

The filing argues that the "Supply Chain Risk" designation is:

- Retaliatory: Used as punishment for declining contract changes, which the filing says violates federal procurement law.

- Unconstitutional: An infringement on the company's "Right to Ethics" and a violation of the First Amendment rights of the developers who built the model’s "Constitution."

- Factually Baseless: Anthropic argues that as a 100% US-owned and operated entity, it cannot—by definition—represent a foreign supply chain risk.

Conclusion: The Ethics of Statecraft

The termination of the Anthropic contract is a warning. In the age of AGI, the state is no longer satisfied with being a "user" of technology; it wants to be the "legislator" of that technology's logic.

If Anthropic wins in court, it will set a precedent that private companies can maintain ethical autonomy even when working with the state. If it loses, the "Ethics-First" movement in AI will be relegated to the margins, and the future of AGI will be written by the partners who say "Yes" to everything.

The Legal Timeline:

- Feb 27, 2026: FASC designates Anthropic a 'Supply Chain Risk'.

- March 1, 2026: $200M contract officially moves to 'Terminated' status.

- March 3, 2026: Anthropic files Case No. 26-1045 in the D.C. Circuit.

- March 15, 2026 (Expected): First hearing on the emergency injunction.
